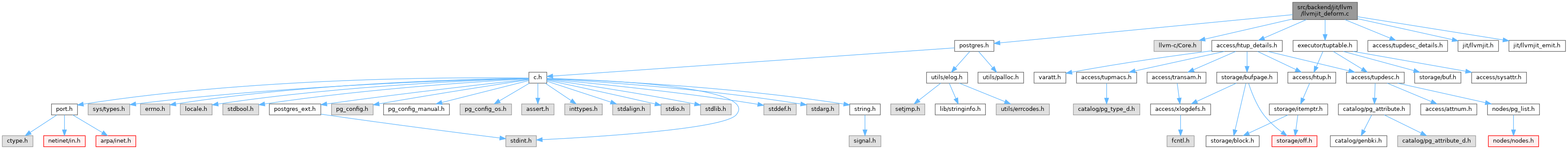

Definition at line 34 of file llvmjit_deform.c.

[Source listing for lines 36 to 758: the function body did not survive extraction; only line numbers and a few fragments remain. Surviving string literals: "tts_values", "tts_ISNULL", "heapslot", "tupleheader", "minimalslot", "tuple", "t_bits", "infomask1", "infomask2", "hasnulls", "maxatt", "v_tupdata_base", "heap_natts", "attisnull", "ispadbyte", "attr_ptr", "varsize_any". Surviving conditions: if (true), else if (att->attlen == -1), else if (att->attlen == -2). Surviving call tail: param_types, lengthof(param_types), 0);]
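
The conditions on att->attlen that survive in the listing (-1 and -2), together with the referenced TYPEALIGN and CompactAttribute::attalignby, point at the usual PostgreSQL convention: a positive attlen is a fixed width, -1 marks a varlena value, and -2 marks a NUL-terminated C string. The following is a minimal sketch of that offset-advancing rule in plain C, not the code of this function, which emits LLVM IR instead of executing these steps directly. SketchAttr, SKETCH_TYPEALIGN, sketch_varsize and sketch_next_offset are hypothetical names introduced here, and the varlena reader assumes a plain 4-byte length prefix, which is a simplification of the real varlena header formats.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Local stand-in that rounds LEN up to a multiple of ALIGNVAL (a power of two). */
#define SKETCH_TYPEALIGN(ALIGNVAL, LEN) \
    (((uintptr_t) (LEN) + ((ALIGNVAL) - 1)) & ~((uintptr_t) ((ALIGNVAL) - 1)))

/* Hypothetical, reduced stand-in for CompactAttribute. */
typedef struct SketchAttr
{
    int16_t attlen;     /* > 0 fixed width, -1 varlena, -2 C string */
    uint8_t attalignby; /* required alignment in bytes, power of two */
} SketchAttr;

/*
 * Hypothetical varlena length reader. ASSUMPTION: the value starts with a
 * 4-byte native-endian total length that includes the prefix itself.
 * Real varlena headers have several encodings that this sketch ignores.
 */
static size_t
sketch_varsize(const char *p)
{
    uint32_t len;

    memcpy(&len, p, sizeof(len));
    return len;
}

/* Return the offset just past the attribute that begins at or after 'off'. */
static size_t
sketch_next_offset(const SketchAttr *att, const char *data, size_t off)
{
    /* Align the start of the attribute first. */
    off = SKETCH_TYPEALIGN(att->attalignby, off);

    if (att->attlen > 0)
        off += (size_t) att->attlen;        /* fixed-width attribute */
    else if (att->attlen == -1)
        off += sketch_varsize(data + off);  /* varlena: length stored in data */
    else
    {
        assert(att->attlen == -2);
        off += strlen(data + off) + 1;      /* C string plus its terminator */
    }
    return off;
}
```

Calling sketch_next_offset once per attribute, feeding each result back in as the next starting offset, walks a tuple's data area from left to right. The sketch always aligns before reading; the "ispadbyte" fragment in the listing suggests the real code treats alignment of varlena values more carefully.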

#define TYPEALIGN(ALIGNVAL, LEN)

#define Assert(condition)

const TupleTableSlotOps TTSOpsVirtual

const TupleTableSlotOps TTSOpsBufferHeapTuple

const TupleTableSlotOps TTSOpsHeapTuple

const TupleTableSlotOps TTSOpsMinimalTuple

#define palloc_array(type, count)

#define FIELDNO_HEAPTUPLEDATA_DATA

#define FIELDNO_HEAPTUPLEHEADERDATA_INFOMASK

#define FIELDNO_HEAPTUPLEHEADERDATA_HOFF

#define FIELDNO_HEAPTUPLEHEADERDATA_BITS

#define FIELDNO_HEAPTUPLEHEADERDATA_INFOMASK2

LLVMTypeRef StructMinimalTupleTableSlot

LLVMValueRef llvm_pg_func(LLVMModuleRef mod, const char *funcname)

char * llvm_expand_funcname(struct LLVMJitContext *context, const char *basename)

LLVMTypeRef llvm_pg_var_func_type(const char *varname)

LLVMTypeRef StructTupleTableSlot

LLVMTypeRef TypeStorageBool

LLVMTypeRef StructHeapTupleTableSlot

LLVMModuleRef llvm_mutable_module(LLVMJitContext *context)

LLVMValueRef AttributeTemplate

LLVMTypeRef StructHeapTupleHeaderData

LLVMTypeRef StructHeapTupleData

void llvm_copy_attributes(LLVMValueRef v_from, LLVMValueRef v_to)

LLVMTypeRef LLVMGetFunctionType(LLVMValueRef r)

#define ATTNULLABLE_VALID

static CompactAttribute * TupleDescCompactAttr(TupleDesc tupdesc, int i)

#define FIELDNO_HEAPTUPLETABLESLOT_OFF

#define FIELDNO_HEAPTUPLETABLESLOT_TUPLE

#define FIELDNO_TUPLETABLESLOT_ISNULL

#define FIELDNO_MINIMALTUPLETABLESLOT_TUPLE

#define FIELDNO_MINIMALTUPLETABLESLOT_OFF

#define FIELDNO_TUPLETABLESLOT_VALUES

#define FIELDNO_TUPLETABLESLOT_NVALID

References Assert, CompactAttribute::attalignby, CompactAttribute::attbyval, CompactAttribute::atthasmissing, CompactAttribute::attisdropped, CompactAttribute::attlen, CompactAttribute::attnullability, ATTNULLABLE_VALID, attnum, AttributeTemplate, b, fb(), FIELDNO_HEAPTUPLEDATA_DATA, FIELDNO_HEAPTUPLEHEADERDATA_BITS, FIELDNO_HEAPTUPLEHEADERDATA_HOFF, FIELDNO_HEAPTUPLEHEADERDATA_INFOMASK, FIELDNO_HEAPTUPLEHEADERDATA_INFOMASK2, FIELDNO_HEAPTUPLETABLESLOT_OFF, FIELDNO_HEAPTUPLETABLESLOT_TUPLE, FIELDNO_MINIMALTUPLETABLESLOT_OFF, FIELDNO_MINIMALTUPLETABLESLOT_TUPLE, FIELDNO_TUPLETABLESLOT_ISNULL, FIELDNO_TUPLETABLESLOT_NVALID, FIELDNO_TUPLETABLESLOT_VALUES, funcname, HEAP_HASNULL, HEAP_NATTS_MASK, lengthof, llvm_copy_attributes(), llvm_expand_funcname(), llvm_mutable_module(), llvm_pg_func(), llvm_pg_var_func_type(), LLVMGetFunctionType(), TupleDescData::natts, palloc_array, pg_unreachable, StructHeapTupleData, StructHeapTupleHeaderData, StructHeapTupleTableSlot, StructMinimalTupleTableSlot, StructTupleTableSlot, TTSOpsBufferHeapTuple, TTSOpsHeapTuple, TTSOpsMinimalTuple, TTSOpsVirtual, TupleDescCompactAttr(), TYPEALIGN, TypeDatum, TypeSizeT, and TypeStorageBool.

Referenced by llvm_compile_expr().