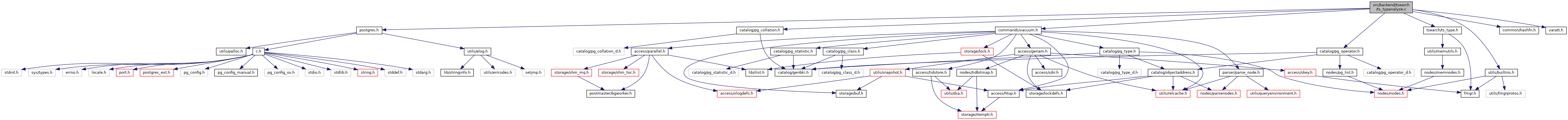

#include "postgres.h"
#include "catalog/pg_collation.h"
#include "catalog/pg_operator.h"
#include "commands/vacuum.h"
#include "common/hashfn.h"
#include "tsearch/ts_type.h"
#include "utils/builtins.h"
#include "varatt.h"


| Data Structures | |
|---|---|
| struct | LexemeHashKey |
| struct | TrackItem |
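Both structures support the lexeme counting in compute_tsvector_stats(). The member names referenced on this page (LexemeHashKey::lexeme, LexemeHashKey::length, TrackItem::key, TrackItem::frequency, TrackItem::delta) suggest the layout below. It is a standalone sketch: the field types, the `const` qualifier and the helper `make_key()` are assumptions for illustration, not copied from ts_typanalyze.c. Keeping the length next to the pointer matters because lexemes stored inside a tsvector are not NUL-terminated.

```c
#include <string.h>

/* Key: points into tsvector lexeme storage, which is NOT NUL-terminated,
 * so the byte length travels with the pointer. (Assumed layout.) */
typedef struct
{
    const char *lexeme;      /* first byte of the lexeme */
    int         length;      /* number of bytes in the lexeme */
} LexemeHashKey;

/* Per-lexeme entry used while counting. (Assumed layout.) */
typedef struct
{
    LexemeHashKey key;       /* the lexeme itself */
    int           frequency; /* observed count */
    int           delta;     /* maximum possible undercount */
} TrackItem;

/* Hypothetical helper: build a key for bytes [start, start + len) of buf. */
static LexemeHashKey
make_key(const char *buf, int start, int len)
{
    LexemeHashKey k;

    k.lexeme = buf + start;
    k.length = len;
    return k;
}
```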

| Functions | |
|---|---|
| static void | compute_tsvector_stats (VacAttrStats *stats, AnalyzeAttrFetchFunc fetchfunc, int samplerows, double totalrows) |
| static void | prune_lexemes_hashtable (HTAB *lexemes_tab, int b_current) |
| static uint32 | lexeme_hash (const void *key, Size keysize) |
| static int | lexeme_match (const void *key1, const void *key2, Size keysize) |
| static int | lexeme_compare (const void *key1, const void *key2) |
| static int | trackitem_compare_frequencies_desc (const void *e1, const void *e2, void *arg) |
| static int | trackitem_compare_lexemes (const void *e1, const void *e2, void *arg) |
| Datum | ts_typanalyze (PG_FUNCTION_ARGS) |

Function Documentation

◆ compute_tsvector_stats()

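static void compute_tsvector_stats (VacAttrStats *stats, AnalyzeAttrFetchFunc fetchfunc, int samplerows, double totalrows)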

Definition at line 141 of file ts_typanalyze.c.

References VacAttrStats::anl_context, ARRPTR, Assert, VacAttrStats::attstattarget, cstring_to_text_with_len(), CurrentMemoryContext, DatumGetPointer(), DatumGetTSVector(), DEBUG3, TrackItem::delta, elog, fb(), TrackItem::frequency, HASH_COMPARE, HASH_CONTEXT, hash_create(), HASH_ELEM, HASH_ENTER, HASH_FUNCTION, hash_get_num_entries(), hash_key(), hash_search(), hash_seq_init(), hash_seq_search(), i, j, TrackItem::key, LexemeHashKey::lexeme, lexeme_hash(), lexeme_match(), Max, memcpy(), MemoryContextSwitchTo(), VacAttrStats::numnumbers, VacAttrStats::numvalues, palloc(), palloc_array, pfree(), PointerGetDatum, prune_lexemes_hashtable(), qsort_interruptible(), TSVectorData::size, VacAttrStats::stacoll, VacAttrStats::stadistinct, VacAttrStats::stakind, VacAttrStats::stanullfrac, VacAttrStats::stanumbers, VacAttrStats::staop, VacAttrStats::stats_valid, VacAttrStats::statypalign, VacAttrStats::statypbyval, VacAttrStats::statypid, VacAttrStats::statyplen, VacAttrStats::stavalues, VacAttrStats::stawidth, STRPTR, trackitem_compare_frequencies_desc(), trackitem_compare_lexemes(), TSVectorGetDatum(), vacuum_delay_point(), value, and VARSIZE_ANY().

Referenced by ts_typanalyze().
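compute_tsvector_stats() finds the most common lexemes with the Lossy Counting algorithm of Manku and Motwani: each tracked lexeme carries a count `f` (TrackItem::frequency) and a maximum undercount `delta` (TrackItem::delta), and the table is pruned at every bucket boundary through prune_lexemes_hashtable(). The sketch below shows that loop over a stream of C strings. It swaps PostgreSQL's dynahash table for a fixed-size array with linear search, and the names `LcState`, `lc_init()`, `lc_add()`, `lc_prune()` and `LC_MAX_ITEMS` are hypothetical; in the real function the bucket width is derived from the statistics target.

```c
#include <string.h>

#define LC_MAX_ITEMS 64  /* hypothetical capacity; the real code uses a hash table */

typedef struct
{
    const char *lexeme;     /* NUL-terminated here for simplicity */
    int         frequency;  /* f */
    int         delta;      /* delta */
} LcItem;

typedef struct
{
    LcItem items[LC_MAX_ITEMS];
    int    nitems;
    int    bucket_width;    /* w: elements per bucket */
    int    lexeme_no;       /* elements processed so far (N) */
    int    b_current;       /* id of the current bucket, starting at 1 */
} LcState;

static void
lc_init(LcState *st, int bucket_width)
{
    st->nitems = 0;
    st->bucket_width = bucket_width;
    st->lexeme_no = 0;
    st->b_current = 1;
}

/* Keep only items with f + delta > b_current, compacting the array. */
static void
lc_prune(LcState *st)
{
    int out = 0;

    for (int i = 0; i < st->nitems; i++)
        if (st->items[i].frequency + st->items[i].delta > st->b_current)
            st->items[out++] = st->items[i];
    st->nitems = out;
}

/* Feed one element; returns 0 on success, -1 if the fixed array is full. */
static int
lc_add(LcState *st, const char *lexeme)
{
    int i;

    for (i = 0; i < st->nitems; i++)
        if (strcmp(st->items[i].lexeme, lexeme) == 0)
            break;

    if (i < st->nitems)
        st->items[i].frequency++;       /* already tracked: bump f */
    else
    {
        if (st->nitems == LC_MAX_ITEMS)
            return -1;
        st->items[i].lexeme = lexeme;   /* new entry: f = 1, delta = b_current - 1 */
        st->items[i].frequency = 1;
        st->items[i].delta = st->b_current - 1;
        st->nitems++;
    }

    /* At each bucket boundary, prune and move to the next bucket. */
    if (++st->lexeme_no % st->bucket_width == 0)
    {
        lc_prune(st);
        st->b_current++;
    }
    return 0;
}
```

A new entry gets `delta = b_current - 1`, the most it could have been undercounted before it was first seen, so pruning with `f + delta <= b_current` only drops items whose true count is at most `b_current`.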

◆ lexeme_compare()

Definition at line 517 of file ts_typanalyze.c.

References fb(), LexemeHashKey::length, and LexemeHashKey::lexeme.

Referenced by lexeme_match(), and trackitem_compare_lexemes().
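lexeme_compare() uses only LexemeHashKey::length and LexemeHashKey::lexeme. A minimal sketch of such a comparator, assuming it orders keys by length first and then byte-wise; `LexemeKey` and `lexeme_key_compare()` are hypothetical names, and this version uses memcmp() because the bytes are not NUL-terminated.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical stand-in for LexemeHashKey. */
typedef struct
{
    const char *lexeme;   /* not NUL-terminated */
    int         length;   /* bytes */
} LexemeKey;

/* Order by length first, then by raw bytes; returns <0, 0 or >0. */
static int
lexeme_key_compare(const void *key1, const void *key2)
{
    const LexemeKey *d1 = key1;
    const LexemeKey *d2 = key2;

    if (d1->length != d2->length)
        return (d1->length > d2->length) ? 1 : -1;
    return memcmp(d1->lexeme, d2->lexeme, (size_t) d1->length);
}
```

The resulting order is not a collation order; it only has to be a consistent total order for hash-table matching and sorting, and comparing lengths first rejects most unequal keys without touching their bytes.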

◆ lexeme_hash()

Definition at line 495 of file ts_typanalyze.c.

References DatumGetUInt32(), hash_any(), LexemeHashKey::length, and LexemeHashKey::lexeme.

Referenced by compute_tsvector_stats().
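lexeme_hash() hashes the key with hash_any() over LexemeHashKey::lexeme and LexemeHashKey::length. The sketch below keeps that shape but swaps in 32-bit FNV-1a for PostgreSQL's hash_any(), so its hash values differ from the server's; `LexemeKey`, `fnv1a32()` and `lexeme_key_hash()` are hypothetical. The essential property is that exactly `length` bytes are hashed, so keys that compare equal also hash equal.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical stand-in for LexemeHashKey. */
typedef struct
{
    const char *lexeme;   /* not NUL-terminated */
    int         length;   /* bytes */
} LexemeKey;

/* 32-bit FNV-1a, used here in place of PostgreSQL's hash_any(). */
static uint32_t
fnv1a32(const unsigned char *data, size_t len)
{
    uint32_t h = 2166136261u;   /* FNV offset basis */

    for (size_t i = 0; i < len; i++)
    {
        h ^= data[i];
        h *= 16777619u;         /* FNV prime */
    }
    return h;
}

/* Hash exactly `length` bytes; keysize is ignored because the key
 * carries its own length. */
static uint32_t
lexeme_key_hash(const void *key, size_t keysize)
{
    const LexemeKey *l = key;

    (void) keysize;
    return fnv1a32((const unsigned char *) l->lexeme, (size_t) l->length);
}
```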

◆ lexeme_match()

Definition at line 507 of file ts_typanalyze.c.

References fb(), and lexeme_compare().

Referenced by compute_tsvector_stats().
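compute_tsvector_stats() references both HASH_COMPARE and lexeme_match(), so lexeme_match() presumably serves as the hash table's key-match function, deferring to lexeme_compare(). A match callback of that kind receives a key size and reports a match by returning 0; since each key stores its own length, the size argument can be ignored. A sketch assuming that callback shape, with hypothetical names (`LexemeKey`, `match_fn`, `lexeme_key_match()`):

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical stand-in for LexemeHashKey. */
typedef struct
{
    const char *lexeme;   /* not NUL-terminated */
    int         length;   /* bytes */
} LexemeKey;

/* Assumed shape of a hash-table match callback: 0 means "keys are equal". */
typedef int (*match_fn) (const void *key1, const void *key2, size_t keysize);

static int
lexeme_key_compare(const void *key1, const void *key2)
{
    const LexemeKey *d1 = key1;
    const LexemeKey *d2 = key2;

    if (d1->length != d2->length)
        return (d1->length > d2->length) ? 1 : -1;
    return memcmp(d1->lexeme, d2->lexeme, (size_t) d1->length);
}

/* Adapter: keysize is redundant because each key stores its own length. */
static int
lexeme_key_match(const void *key1, const void *key2, size_t keysize)
{
    (void) keysize;
    return lexeme_key_compare(key1, key2);
}

/* Compile-time check that the adapter fits the callback type. */
static const match_fn lexeme_match_cb = lexeme_key_match;
```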

◆ prune_lexemes_hashtable()

Definition at line 470 of file ts_typanalyze.c.

References TrackItem::delta, elog, ERROR, fb(), TrackItem::frequency, HASH_REMOVE, hash_search(), hash_seq_init(), hash_seq_search(), TrackItem::key, LexemeHashKey::lexeme, and pfree().

Referenced by compute_tsvector_stats().
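prune_lexemes_hashtable() scans the table (hash_seq_init(), hash_seq_search()), removes entries with HASH_REMOVE and frees their lexeme copies with pfree(). Under Lossy Counting, the entries to drop are those with `frequency + delta <= b_current`. The sketch below applies that rule to a plain array, using free() in place of pfree(); `Entry`, `prune_entries()` and `copy_lexeme()` are hypothetical.

```c
#include <stdlib.h>
#include <string.h>

/* Hypothetical stand-in for TrackItem. */
typedef struct
{
    char *lexeme;     /* heap copy owned by the entry */
    int   frequency;  /* f */
    int   delta;      /* delta */
} Entry;

/* Remove entries with frequency + delta <= b_current, compacting the
 * array in place and freeing each removed lexeme. Returns the new count. */
static int
prune_entries(Entry *entries, int n, int b_current)
{
    int kept = 0;

    for (int i = 0; i < n; i++)
    {
        if (entries[i].frequency + entries[i].delta <= b_current)
            free(entries[i].lexeme);
        else
            entries[kept++] = entries[i];
    }
    return kept;
}

/* Hypothetical helper: heap-copy a string (similar in spirit to pstrdup). */
static char *
copy_lexeme(const char *s)
{
    size_t len = strlen(s) + 1;
    char  *p = malloc(len);

    if (p != NULL)
        memcpy(p, s, len);
    return p;
}
```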

◆ trackitem_compare_frequencies_desc()

static int trackitem_compare_frequencies_desc (const void *e1, const void *e2, void *arg)

Definition at line 535 of file ts_typanalyze.c.

References fb(), and TrackItem::frequency.

Referenced by compute_tsvector_stats().
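trackitem_compare_frequencies_desc() has the three-argument signature expected by qsort_interruptible(), which compute_tsvector_stats() also references, so it is presumably the comparator used to rank tracked lexemes by frequency. A sketch with the standard qsort(), assuming the array holds pointers to TrackItem: the comparator therefore drops `arg` and dereferences twice. `Item`, `item_cmp_freq_desc()` and `sort_by_frequency_desc()` are hypothetical. It compares explicitly instead of subtracting frequencies, which avoids overflow for extreme values.

```c
#include <stdlib.h>

/* Hypothetical stand-in for TrackItem. */
typedef struct
{
    const char *lexeme;
    int         frequency;
    int         delta;
} Item;

/* qsort comparator over an array of Item pointers: highest frequency first. */
static int
item_cmp_freq_desc(const void *e1, const void *e2)
{
    const Item *const *t1 = e1;
    const Item *const *t2 = e2;

    if ((*t1)->frequency != (*t2)->frequency)
        return ((*t1)->frequency < (*t2)->frequency) ? 1 : -1;
    return 0;
}

/* Sort n pointers so the most frequent items come first. */
static void
sort_by_frequency_desc(Item **items, size_t n)
{
    qsort(items, n, sizeof(Item *), item_cmp_freq_desc);
}
```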

◆ trackitem_compare_lexemes()

Definition at line 547 of file ts_typanalyze.c.

References fb(), and lexeme_compare().

Referenced by compute_tsvector_stats().
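trackitem_compare_lexemes() orders TrackItem pointers by delegating to lexeme_compare(), so the selected lexemes end up in a consistent key order. The sketch sorts with qsort() and then, purely to illustrate why a sorted array is useful, looks entries up with bsearch() using the same comparator. `Key`, `Item`, `key_compare()`, `item_cmp_lexemes()` and `find_item()` are hypothetical.

```c
#include <stdlib.h>
#include <string.h>

/* Hypothetical stand-ins for LexemeHashKey and TrackItem. */
typedef struct
{
    const char *lexeme;   /* not NUL-terminated */
    int         length;
} Key;

typedef struct
{
    Key key;
    int frequency;
    int delta;
} Item;

/* Length first, then bytes (same order as the lexeme_compare() sketch). */
static int
key_compare(const Key *d1, const Key *d2)
{
    if (d1->length != d2->length)
        return (d1->length > d2->length) ? 1 : -1;
    return memcmp(d1->lexeme, d2->lexeme, (size_t) d1->length);
}

/* qsort/bsearch comparator over Item pointers, delegating to key order. */
static int
item_cmp_lexemes(const void *e1, const void *e2)
{
    const Item *const *t1 = e1;
    const Item *const *t2 = e2;

    return key_compare(&(*t1)->key, &(*t2)->key);
}

/* Look up a lexeme in an array already sorted with item_cmp_lexemes. */
static Item *
find_item(Item **sorted, size_t n, const char *lexeme, int length)
{
    Item   probe = {{lexeme, length}, 0, 0};
    Item  *probe_ptr = &probe;
    Item **hit = bsearch(&probe_ptr, sorted, n, sizeof(Item *),
                         item_cmp_lexemes);

    return hit ? *hit : NULL;
}
```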

◆ ts_typanalyze()

Datum ts_typanalyze (PG_FUNCTION_ARGS)

Definition at line 58 of file ts_typanalyze.c.

References VacAttrStats::attstattarget, VacAttrStats::compute_stats, compute_tsvector_stats(), default_statistics_target, VacAttrStats::minrows, PG_GETARG_POINTER, and PG_RETURN_BOOL.
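ts_typanalyze() is the typanalyze hook for tsvector columns. Judging from its references, it resolves VacAttrStats::attstattarget (falling back to default_statistics_target), installs compute_tsvector_stats() as VacAttrStats::compute_stats, sets VacAttrStats::minrows and returns true. The sketch mirrors those steps with a trimmed-down, hypothetical `Stats` struct and `lexeme_typanalyze()` function; treating a negative target as "unset" and sizing the sample at 300 rows per unit of target are assumptions modelled on PostgreSQL's standard ANALYZE behavior.

```c
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical, trimmed-down stand-in for VacAttrStats. */
typedef struct Stats Stats;
typedef void (*compute_fn) (Stats *stats, int samplerows, double totalrows);

struct Stats
{
    int        attstattarget;  /* per-column target; assumed: < 0 means unset */
    compute_fn compute_stats;  /* callback ANALYZE will invoke later */
    int        minrows;        /* number of sample rows requested */
};

static int default_target = 100;   /* stand-in for default_statistics_target */

/* Placeholder for compute_tsvector_stats(); does nothing in this sketch. */
static void
compute_lexeme_stats(Stats *stats, int samplerows, double totalrows)
{
    (void) stats;
    (void) samplerows;
    (void) totalrows;
}

/* Shape of a typanalyze hook: configure the stats struct, report success. */
static bool
lexeme_typanalyze(Stats *stats)
{
    if (stats->attstattarget < 0)
        stats->attstattarget = default_target;
    stats->compute_stats = compute_lexeme_stats;
    stats->minrows = 300 * stats->attstattarget;   /* assumed sizing rule */
    return true;
}
```

With the default target of 100, the hook in this sketch requests 30,000 sample rows.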