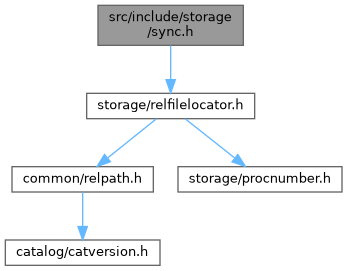

#include "storage/relfilelocator.h"


| Data Structures | |
|---|---|
| struct | FileTag |

| Typedefs | |
|---|---|
| typedef enum SyncRequestType | SyncRequestType |
| typedef enum SyncRequestHandler | SyncRequestHandler |
| typedef struct FileTag | FileTag |

| Enumerations | |
|---|---|
| enum | SyncRequestType { SYNC_REQUEST, SYNC_UNLINK_REQUEST, SYNC_FORGET_REQUEST, SYNC_FILTER_REQUEST } |
| enum | SyncRequestHandler { SYNC_HANDLER_MD = 0, SYNC_HANDLER_CLOG, SYNC_HANDLER_COMMIT_TS, SYNC_HANDLER_MULTIXACT_OFFSET, SYNC_HANDLER_MULTIXACT_MEMBER, SYNC_HANDLER_NONE } |

| Functions | |
|---|---|
| void | InitSync(void) |
| void | SyncPreCheckpoint(void) |
| void | SyncPostCheckpoint(void) |
| void | ProcessSyncRequests(void) |
| void | RememberSyncRequest(const FileTag *ftag, SyncRequestType type) |
| bool | RegisterSyncRequest(const FileTag *ftag, SyncRequestType type, bool retryOnError) |

Typedef Documentation

◆ FileTag
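
FileTag is the key of the pending-operations table, and InitSync() creates that table with HASH_BLOBS (see its references), so keys are hashed and compared as raw bytes. The minimal sketch below is a standalone model, not PostgreSQL code: ToyFileTag, its field names and widths, and the FNV-1a hash are all hypothetical. It shows why a blob key is zero-filled before its fields are set: two tags naming the same file must then be byte-for-byte identical, padding included.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical stand-in for FileTag: field names and widths are
 * assumptions for illustration, not the real struct layout. */
typedef struct ToyFileTag
{
    int16_t     handler;        /* which sync handler owns the file */
    int16_t     forknum;        /* relation fork */
    uint32_t    spcoid;         /* tablespace */
    uint32_t    dboid;          /* database */
    uint32_t    relnumber;      /* relation file number */
    uint64_t    segno;          /* segment number */
} ToyFileTag;

/* Zero the whole key first so any padding bytes are deterministic,
 * then fill in the fields. */
static void
toy_init_filetag(ToyFileTag *tag, int16_t handler, int16_t forknum,
                 uint32_t spc, uint32_t db, uint32_t rel, uint64_t segno)
{
    memset(tag, 0, sizeof(*tag));
    tag->handler = handler;
    tag->forknum = forknum;
    tag->spcoid = spc;
    tag->dboid = db;
    tag->relnumber = rel;
    tag->segno = segno;
}

/* Blob-style equality: keys are equal when their bytes are equal. */
static bool
toy_filetag_equal(const ToyFileTag *a, const ToyFileTag *b)
{
    return memcmp(a, b, sizeof(ToyFileTag)) == 0;
}

/* Blob-style hash over the raw key bytes (FNV-1a, chosen only for
 * brevity; it is not the hash function dynahash uses). */
static uint32_t
toy_filetag_hash(const ToyFileTag *tag)
{
    const unsigned char *p = (const unsigned char *) tag;
    uint32_t    h = 2166136261u;

    for (size_t i = 0; i < sizeof(*tag); i++)
    {
        h ^= p[i];
        h *= 16777619u;
    }
    return h;
}
```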

◆ SyncRequestHandler

◆ SyncRequestType

Enumeration Type Documentation

◆ SyncRequestHandler

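| Enumerator | |
|---|---|
| SYNC_HANDLER_MD | md.c (relation segment files) |
| SYNC_HANDLER_CLOG | pg_xact |
| SYNC_HANDLER_COMMIT_TS | pg_commit_ts |
| SYNC_HANDLER_MULTIXACT_OFFSET | pg_multixact/offsets |
| SYNC_HANDLER_MULTIXACT_MEMBER | pg_multixact/members |
| SYNC_HANDLER_NONE | No handler |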
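
ProcessSyncRequests(), RememberSyncRequest() and SyncPostCheckpoint() all reference a table named syncsw whose SyncOps entries provide sync_syncfiletag, sync_unlinkfiletag and sync_filetagmatches callbacks, selected through FileTag::handler. A minimal sketch of that dispatch pattern follows, using hypothetical names (ToyHandler, ToyOps, toy_syncsw); the static assertion captures the assumption that enumerator values line up with table indexes.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical mirror of SyncRequestHandler. The values double as
 * indexes into toy_syncsw, so the two must stay in step. */
typedef enum ToyHandler
{
    TOY_HANDLER_MD = 0,
    TOY_HANDLER_CLOG,
    TOY_HANDLER_NONE            /* no callbacks; equals the table size */
} ToyHandler;

typedef struct ToyTag
{
    int         handler;        /* a ToyHandler value */
    int         segno;
} ToyTag;

/* Hypothetical callback set modeled on the SyncOps members named in the
 * references. The sync and unlink callbacks return 0 on success. */
typedef struct ToyOps
{
    int         (*syncfiletag) (const ToyTag *tag);
    int         (*unlinkfiletag) (const ToyTag *tag);
    bool        (*filetagmatches) (const ToyTag *filter, const ToyTag *candidate);
} ToyOps;

static int  toy_sync_calls[TOY_HANDLER_NONE];   /* per-handler call counts */

static int
toy_md_sync(const ToyTag *tag)
{
    (void) tag;
    toy_sync_calls[TOY_HANDLER_MD]++;
    return 0;
}

static int
toy_md_unlink(const ToyTag *tag)
{
    (void) tag;
    return 0;
}

static bool
toy_md_matches(const ToyTag *filter, const ToyTag *candidate)
{
    return filter->segno == candidate->segno;
}

static int
toy_clog_sync(const ToyTag *tag)
{
    (void) tag;
    toy_sync_calls[TOY_HANDLER_CLOG]++;
    return 0;
}

/* Designated initializers tie each slot to its enum value; callbacks a
 * handler does not need are left NULL. */
static const ToyOps toy_syncsw[] = {
    [TOY_HANDLER_MD] = {
        .syncfiletag = toy_md_sync,
        .unlinkfiletag = toy_md_unlink,
        .filetagmatches = toy_md_matches,
    },
    [TOY_HANDLER_CLOG] = {
        .syncfiletag = toy_clog_sync,
    },
};

_Static_assert(sizeof(toy_syncsw) / sizeof(toy_syncsw[0]) == TOY_HANDLER_NONE,
               "every real handler needs a table slot");

/* Dispatch by indexing the table with the tag's handler. */
static int
toy_sync_tag(const ToyTag *tag)
{
    assert(tag->handler >= 0 && tag->handler < TOY_HANDLER_NONE);
    return toy_syncsw[tag->handler].syncfiletag(tag);
}
```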

◆ SyncRequestType

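| Enumerator | |
|---|---|
| SYNC_REQUEST | Schedule a call of the handler's sync function |
| SYNC_UNLINK_REQUEST | Schedule a call of the handler's unlink function |
| SYNC_FORGET_REQUEST | Forget all pending calls for a tag |
| SYNC_FILTER_REQUEST | Forget all pending calls that satisfy the handler's match function |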

Definition at line 23 of file sync.h.

Function Documentation

◆ InitSync()

Definition at line 125 of file sync.c.

References ALLOCSET_DEFAULT_SIZES, AllocSetContextCreate, AmCheckpointerProcess, fb(), HASH_BLOBS, HASH_CONTEXT, hash_create(), HASH_ELEM, IsUnderPostmaster, MemoryContextAllowInCriticalSection(), NIL, pendingOps, pendingOpsCxt, pendingUnlinks, and TopMemoryContext.

Referenced by BaseInit().
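
Judging from its references to IsUnderPostmaster, AmCheckpointerProcess and hash_create(), InitSync() builds the local pending-operations state (pendingOps, pendingUnlinks, pendingOpsCxt) only in some processes, presumably a standalone backend or the checkpointer; elsewhere pendingOps stays NULL. The reference to MemoryContextAllowInCriticalSection() suggests that context may be used inside critical sections, which this sketch does not model. The hedged sketch below captures only the create-or-skip decision, with a hypothetical ToyProcess struct and a calloc'd array as a placeholder for the hash table.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdlib.h>

/* Hypothetical process description; PostgreSQL's equivalent checks are
 * IsUnderPostmaster and AmCheckpointerProcess(). */
typedef struct ToyProcess
{
    bool        under_postmaster;
    bool        is_checkpointer;
    int        *pending_ops;    /* placeholder for the pendingOps table */
} ToyProcess;

/* Create local pending-request state only where requests are absorbed:
 * a standalone backend, or the checkpointer. */
static void
toy_init_sync(ToyProcess *proc)
{
    proc->pending_ops = NULL;
    if (!proc->under_postmaster || proc->is_checkpointer)
    {
        /* initial capacity is arbitrary in this sketch */
        proc->pending_ops = calloc(100, sizeof(int));
        assert(proc->pending_ops != NULL);
    }
}

/* True if this process would record requests itself instead of
 * forwarding them. */
static bool
toy_tracks_locally(const ToyProcess *proc)
{
    return proc->pending_ops != NULL;
}
```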

◆ ProcessSyncRequests()

Definition at line 287 of file sync.c.

References AbsorbSyncRequests(), Assert, PendingFsyncEntry::canceled, CheckpointStats, CheckpointStatsData::ckpt_agg_sync_time, CheckpointStatsData::ckpt_longest_sync, CheckpointStatsData::ckpt_sync_rels, PendingFsyncEntry::cycle_ctr, data_sync_elevel(), DEBUG1, elog, enableFsync, ereport, errcode_for_file_access(), errmsg, errmsg_internal(), ERROR, fb(), FILE_POSSIBLY_DELETED, FSYNCS_PER_ABSORB, FileTag::handler, HASH_REMOVE, hash_search(), hash_seq_init(), hash_seq_search(), INSTR_TIME_GET_MICROSEC, INSTR_TIME_SET_CURRENT, INSTR_TIME_SUBTRACT, log_checkpoints, longest(), MAXPGPATH, pendingOps, sync_cycle_ctr, SyncOps::sync_syncfiletag, syncsw, and PendingFsyncEntry::tag.

Referenced by CheckPointGuts().
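
The references to sync_cycle_ctr, PendingFsyncEntry::cycle_ctr, PendingFsyncEntry::canceled, FSYNCS_PER_ABSORB and AbsorbSyncRequests() point to a cycle-counter scheme: the counter is advanced before the scan, so requests that arrive while the scan runs carry the new value and are left for the next checkpoint, while canceled entries are dropped without a sync. The standalone sketch below models that scheme with hypothetical names (ToyEntry, toy_absorb, a toy request queue) and an artificially small absorb interval. It omits the hash table, timing statistics and the error handling suggested by FILE_POSSIBLY_DELETED and data_sync_elevel().

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define TOY_MAX_ENTRIES 16
#define TOY_FSYNCS_PER_ABSORB 2     /* small value so the demo absorbs often */

typedef uint16_t ToyCycleCtr;

/* Hypothetical pending-fsync entry modeled on PendingFsyncEntry. */
typedef struct ToyEntry
{
    int         segno;          /* stand-in for the FileTag key */
    ToyCycleCtr cycle_ctr;      /* counter value of the oldest request */
    bool        canceled;
    bool        in_use;
} ToyEntry;

static ToyEntry toy_pending[TOY_MAX_ENTRIES];
static ToyCycleCtr toy_sync_cycle_ctr = 0;
static int  toy_fsynced = 0;

/* Forwarded requests waiting to be absorbed (hypothetical queue). */
static int  toy_queue[TOY_MAX_ENTRIES];
static int  toy_queue_len = 0;
static int  toy_queue_pos = 0;

/* Record a request; a duplicate keeps its older cycle_ctr. */
static void
toy_remember(int segno)
{
    int         free_slot = -1;

    for (int i = 0; i < TOY_MAX_ENTRIES; i++)
    {
        ToyEntry   *e = &toy_pending[i];

        if (e->in_use && e->segno == segno)
        {
            if (e->canceled)
            {
                e->cycle_ctr = toy_sync_cycle_ctr;
                e->canceled = false;
            }
            return;
        }
        if (!e->in_use && free_slot < 0)
            free_slot = i;
    }
    assert(free_slot >= 0);
    toy_pending[free_slot] = (ToyEntry) {segno, toy_sync_cycle_ctr, false, true};
}

/* Stand-in for AbsorbSyncRequests(); delivers at most one queued
 * request per call so the timing is easy to follow. */
static void
toy_absorb(void)
{
    if (toy_queue_pos < toy_queue_len)
        toy_remember(toy_queue[toy_queue_pos++]);
}

static void
toy_process_sync_requests(void)
{
    int         absorb_counter = TOY_FSYNCS_PER_ABSORB;

    toy_absorb();               /* pick up requests queued so far */
    toy_sync_cycle_ctr++;       /* later entries carry the new value */

    for (int i = 0; i < TOY_MAX_ENTRIES; i++)
    {
        ToyEntry   *e = &toy_pending[i];

        /* Skip empty slots and entries added during this pass. */
        if (!e->in_use || e->cycle_ctr == toy_sync_cycle_ctr)
            continue;

        if (--absorb_counter <= 0)
        {
            toy_absorb();       /* keep the request queue from overflowing */
            absorb_counter = TOY_FSYNCS_PER_ABSORB;
        }

        if (!e->canceled)
            toy_fsynced++;      /* stand-in for the handler's sync call */

        e->in_use = false;      /* done with this entry */
    }
}
```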

◆ RegisterSyncRequest()

extern bool RegisterSyncRequest(const FileTag *ftag, SyncRequestType type, bool retryOnError)

Definition at line 581 of file sync.c.

References fb(), ForwardSyncRequest(), pendingOps, RememberSyncRequest(), type, WaitLatch(), WL_EXIT_ON_PM_DEATH, and WL_TIMEOUT.

Referenced by ForgetDatabaseSyncRequests(), register_dirty_segment(), register_forget_request(), register_unlink_segment(), SlruInternalDeleteSegment(), and SlruPhysicalWritePage().
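
Based on its references to pendingOps, RememberSyncRequest(), ForwardSyncRequest() and WaitLatch() with WL_TIMEOUT, RegisterSyncRequest() appears to record the request directly when the process owns a pending-operations table, and otherwise to forward it to the checkpointer, sleeping and retrying while the queue is full if retryOnError is true. The sketch below models that control flow with hypothetical stand-ins (toy_forward for the shared queue, toy_wait for the timed sleep); the returned bool reports whether the request was queued.

```c
#include <assert.h>
#include <stdbool.h>

#define TOY_QUEUE_SIZE 2            /* tiny capacity so the demo fills it */

static bool toy_have_local_table = false;   /* like pendingOps != NULL */
static int  toy_local_count = 0;            /* requests recorded locally */
static int  toy_queue_len = 0;              /* requests awaiting the checkpointer */
static int  toy_waits = 0;                  /* number of sleeps taken */

/* Stand-in for ForwardSyncRequest(): fails while the queue is full. */
static bool
toy_forward(int segno)
{
    (void) segno;
    if (toy_queue_len >= TOY_QUEUE_SIZE)
        return false;
    toy_queue_len++;
    return true;
}

/* Stand-in for the timed WaitLatch() sleep. To keep the demo finite we
 * pretend the checkpointer emptied the queue while we slept. */
static void
toy_wait(void)
{
    toy_waits++;
    toy_queue_len = 0;
}

static bool
toy_register_sync_request(int segno, bool retry_on_error)
{
    bool        ret;

    if (toy_have_local_table)
    {
        toy_local_count++;          /* record directly, no forwarding */
        return true;
    }

    for (;;)
    {
        ret = toy_forward(segno);
        if (ret || !retry_on_error)
            break;                  /* queued, or caller accepts failure */
        toy_wait();
    }
    return ret;
}
```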

◆ RememberSyncRequest()

extern void RememberSyncRequest(const FileTag *ftag, SyncRequestType type)

Definition at line 488 of file sync.c.

References Assert, PendingFsyncEntry::canceled, PendingUnlinkEntry::canceled, checkpoint_cycle_ctr, PendingFsyncEntry::cycle_ctr, PendingUnlinkEntry::cycle_ctr, fb(), FileTag::handler, HASH_ENTER, HASH_FIND, hash_search(), hash_seq_init(), hash_seq_search(), lappend(), lfirst, MemoryContextSwitchTo(), palloc_object, pendingOps, pendingOpsCxt, pendingUnlinks, sync_cycle_ctr, SyncOps::sync_filetagmatches, SYNC_FILTER_REQUEST, SYNC_FORGET_REQUEST, SYNC_REQUEST, SYNC_UNLINK_REQUEST, syncsw, PendingUnlinkEntry::tag, and type.

Referenced by AbsorbSyncRequests(), and RegisterSyncRequest().
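
RememberSyncRequest() references all four SyncRequestType values, hash lookups with HASH_FIND and HASH_ENTER, and both PendingFsyncEntry and PendingUnlinkEntry, which suggests one branch per request type. The hypothetical model below (ToyTag, toy_fsyncs and toy_unlinks are illustration names, and the database-level match predicate is an assumption) shows plausible semantics: a sync request adds or revives an fsync entry, an unlink request is appended with the current checkpoint counter, a forget request cancels one exact tag, and a filter request cancels every fsync and unlink entry the predicate accepts.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define TOY_MAX 8

typedef enum
{
    TOY_SYNC_REQUEST,
    TOY_UNLINK_REQUEST,
    TOY_FORGET_REQUEST,
    TOY_FILTER_REQUEST
} ToyRequestType;

typedef struct { int db; int rel; } ToyTag;     /* hypothetical key */

typedef struct
{
    ToyTag      tag;
    uint16_t    cycle_ctr;
    bool        canceled;
    bool        in_use;
} ToyPending;

static ToyPending toy_fsyncs[TOY_MAX];      /* stand-in for pendingOps */
static ToyPending toy_unlinks[TOY_MAX];     /* stand-in for pendingUnlinks */
static int  toy_nunlinks = 0;
static uint16_t toy_sync_cycle_ctr = 0;
static uint16_t toy_checkpoint_cycle_ctr = 0;

static bool
toy_same(const ToyTag *a, const ToyTag *b)
{
    return a->db == b->db && a->rel == b->rel;
}

/* Hypothetical filter predicate: the filter tag names a whole database. */
static bool
toy_matches(const ToyTag *filter, const ToyTag *candidate)
{
    return filter->db == candidate->db;
}

static ToyPending *
toy_find(const ToyTag *tag)
{
    for (int i = 0; i < TOY_MAX; i++)
        if (toy_fsyncs[i].in_use && toy_same(&toy_fsyncs[i].tag, tag))
            return &toy_fsyncs[i];
    return NULL;
}

static void
toy_remember(const ToyTag *tag, ToyRequestType type)
{
    if (type == TOY_FORGET_REQUEST)
    {
        ToyPending *e = toy_find(tag);

        if (e != NULL)
            e->canceled = true;             /* cancel one exact tag */
    }
    else if (type == TOY_FILTER_REQUEST)
    {
        for (int i = 0; i < TOY_MAX; i++)   /* matching fsync entries */
            if (toy_fsyncs[i].in_use && toy_matches(tag, &toy_fsyncs[i].tag))
                toy_fsyncs[i].canceled = true;
        for (int i = 0; i < toy_nunlinks; i++)  /* matching unlink entries */
            if (toy_matches(tag, &toy_unlinks[i].tag))
                toy_unlinks[i].canceled = true;
    }
    else if (type == TOY_UNLINK_REQUEST)
    {
        ToyPending *u;

        assert(toy_nunlinks < TOY_MAX);
        u = &toy_unlinks[toy_nunlinks++];   /* append in arrival order */
        u->tag = *tag;
        u->cycle_ctr = toy_checkpoint_cycle_ctr;
        u->canceled = false;
        u->in_use = true;
    }
    else
    {
        ToyPending *e = toy_find(tag);
        bool        found = (e != NULL);

        assert(type == TOY_SYNC_REQUEST);
        if (!found)
        {
            for (int i = 0; i < TOY_MAX && e == NULL; i++)
                if (!toy_fsyncs[i].in_use)
                    e = &toy_fsyncs[i];
            assert(e != NULL);
            e->tag = *tag;
            e->in_use = true;
        }
        /* Start a new cycle only for a new or previously canceled entry;
         * an existing live entry keeps its older cycle_ctr. */
        if (!found || e->canceled)
        {
            e->cycle_ctr = toy_sync_cycle_ctr;
            e->canceled = false;
        }
    }
}
```

In this model, keeping the older cycle_ctr on a duplicate request means an entry is synced in the current pass whenever any of its requests predates that pass.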

◆ SyncPostCheckpoint()

Definition at line 203 of file sync.c.

References AbsorbSyncRequests(), PendingUnlinkEntry::canceled, checkpoint_cycle_ctr, PendingUnlinkEntry::cycle_ctr, ereport, errcode_for_file_access(), errmsg, fb(), FileTag::handler, i, lfirst, list_cell_number(), list_delete_first_n(), list_free_deep(), list_nth(), MAXPGPATH, NIL, pendingUnlinks, pfree(), SyncOps::sync_unlinkfiletag, syncsw, PendingUnlinkEntry::tag, UNLINKS_PER_ABSORB, and WARNING.

Referenced by CreateCheckPoint().
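
SyncPostCheckpoint() references PendingUnlinkEntry::cycle_ctr, checkpoint_cycle_ctr, SyncOps::sync_unlinkfiletag and list_delete_first_n(), which fits a scan of the pending-unlink list in arrival order: canceled entries are skipped, files requested before the current checkpoint began are removed, and the scan stops at the first entry stamped with the current counter. The sketch below models that with hypothetical names and a fixed array in place of a List. It leaves out the periodic AbsorbSyncRequests() call every UNLINKS_PER_ABSORB entries and the WARNING reported when an unlink fails.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define TOY_MAX 8

typedef struct
{
    int         segno;          /* stand-in for the FileTag */
    uint16_t    cycle_ctr;      /* checkpoint counter when requested */
    bool        canceled;
} ToyUnlink;

static ToyUnlink toy_unlinks[TOY_MAX];  /* kept in arrival order */
static int  toy_nunlinks = 0;
static uint16_t toy_checkpoint_cycle_ctr = 0;
static int  toy_removed[TOY_MAX];       /* files "unlinked" so far */
static int  toy_nremoved = 0;

static void
toy_post_checkpoint(void)
{
    int         i;

    for (i = 0; i < toy_nunlinks; i++)
    {
        ToyUnlink  *e = &toy_unlinks[i];

        if (e->canceled)
            continue;           /* skip canceled requests */

        /* Entries are appended in order, so the first one stamped with
         * the current counter starts the requests made during this
         * checkpoint; those wait for the next one. */
        if (e->cycle_ctr == toy_checkpoint_cycle_ctr)
            break;

        toy_removed[toy_nremoved++] = e->segno;  /* stand-in for unlink */
        e->canceled = true;
    }

    /* Drop the processed prefix and keep the entries from i onward. */
    memmove(toy_unlinks, toy_unlinks + i,
            (size_t) (toy_nunlinks - i) * sizeof(ToyUnlink));
    toy_nunlinks -= i;
}
```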

◆ SyncPreCheckpoint()

Definition at line 178 of file sync.c.

References AbsorbSyncRequests(), and checkpoint_cycle_ctr.

Referenced by CreateCheckPoint().
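
SyncPreCheckpoint() references only AbsorbSyncRequests() and checkpoint_cycle_ctr. A plausible reading, sketched below with hypothetical names, is that it absorbs forwarded requests first and then advances the counter, so unlink requests forwarded before the checkpoint started keep the old stamp and become eligible in SyncPostCheckpoint(), while later ones wait for the next checkpoint. Both of those functions are referenced by CreateCheckPoint(), and ProcessSyncRequests() by CheckPointGuts().

```c
#include <assert.h>
#include <stdint.h>

#define TOY_MAX 8

static uint16_t toy_checkpoint_cycle_ctr = 0;
static int  toy_nforwarded = 0;         /* queued by backends, not yet absorbed */
static uint16_t toy_stamp[TOY_MAX];     /* cycle stamp of each absorbed request */
static int  toy_nabsorbed = 0;

/* Stand-in for AbsorbSyncRequests(): move every queued unlink request
 * into the local list, stamping it with the current checkpoint cycle. */
static void
toy_absorb(void)
{
    for (int i = 0; i < toy_nforwarded; i++)
        toy_stamp[toy_nabsorbed++] = toy_checkpoint_cycle_ctr;
    toy_nforwarded = 0;
}

/* Absorb first, then advance: anything forwarded before the checkpoint
 * began keeps the old stamp and is eligible after this checkpoint. */
static void
toy_pre_checkpoint(void)
{
    toy_absorb();
    toy_checkpoint_cycle_ctr++;
}
```

Reversing the two steps in this model would stamp already-forwarded requests with the new value and defer them by a full checkpoint.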