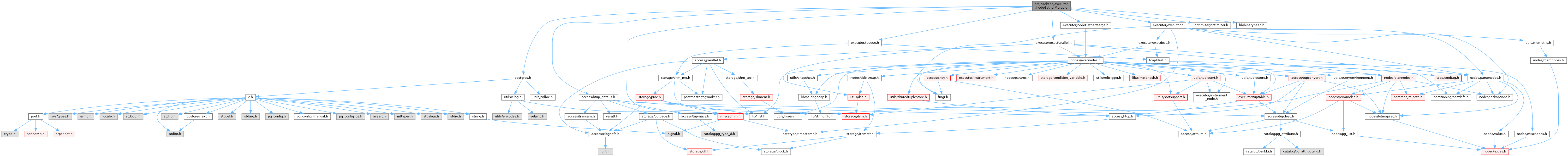

#include "postgres.h"

#include "access/htup_details.h"

#include "executor/executor.h"

#include "executor/execParallel.h"

#include "executor/nodeGatherMerge.h"

#include "executor/tqueue.h"

#include "lib/binaryheap.h"

#include "miscadmin.h"

#include "optimizer/optimizer.h"

#include "utils/sortsupport.h"

Data Structures

| struct | GMReaderTupleBuffer |

Macros

| #define | MAX_TUPLE_STORE 10 |

Typedefs

| typedef struct GMReaderTupleBuffer | GMReaderTupleBuffer |

| typedef int32 | SlotNumber |

Functions

| static TupleTableSlot * | ExecGatherMerge (PlanState *pstate) |

| static int32 | heap_compare_slots (Datum a, Datum b, void *arg) |

| static TupleTableSlot * | gather_merge_getnext (GatherMergeState *gm_state) |

| static MinimalTuple | gm_readnext_tuple (GatherMergeState *gm_state, int nreader, bool nowait, bool *done) |

| static void | ExecShutdownGatherMergeWorkers (GatherMergeState *node) |

| static void | gather_merge_setup (GatherMergeState *gm_state) |

| static void | gather_merge_init (GatherMergeState *gm_state) |

| static void | gather_merge_clear_tuples (GatherMergeState *gm_state) |

| static bool | gather_merge_readnext (GatherMergeState *gm_state, int reader, bool nowait) |

| static void | load_tuple_array (GatherMergeState *gm_state, int reader) |

| GatherMergeState * | ExecInitGatherMerge (GatherMerge *node, EState *estate, int eflags) |

| void | ExecEndGatherMerge (GatherMergeState *node) |

| void | ExecShutdownGatherMerge (GatherMergeState *node) |

| void | ExecReScanGatherMerge (GatherMergeState *node) |

Macro Definition Documentation

◆ MAX_TUPLE_STORE

| #define MAX_TUPLE_STORE 10 |

Definition at line 33 of file nodeGatherMerge.c.

Typedef Documentation

◆ GMReaderTupleBuffer

| typedef struct GMReaderTupleBuffer GMReaderTupleBuffer |

◆ SlotNumber

| typedef int32 SlotNumber |

Definition at line 746 of file nodeGatherMerge.c.

Function Documentation

◆ ExecEndGatherMerge()

| void ExecEndGatherMerge | ( | GatherMergeState * | node | ) |

Definition at line 292 of file nodeGatherMerge.c.

References ExecEndNode(), ExecShutdownGatherMerge(), and outerPlanState.

Referenced by ExecEndNode().

◆ ExecGatherMerge()

| static TupleTableSlot * ExecGatherMerge | ( | PlanState * | pstate | ) |

Definition at line 184 of file nodeGatherMerge.c.

References castNode, CHECK_FOR_INTERRUPTS, ExprContext::ecxt_outertuple, EState::es_parallel_workers_launched, EState::es_parallel_workers_to_launch, EState::es_use_parallel_mode, ExecInitParallelPlan(), ExecParallelCreateReaders(), ExecParallelReinitialize(), ExecProject(), fb(), gather_merge_getnext(), GatherMergeState::initialized, LaunchParallelWorkers(), memcpy(), GatherMergeState::need_to_scan_locally, GatherMergeState::nreaders, ParallelContext::nworkers_launched, GatherMergeState::nworkers_launched, ParallelContext::nworkers_to_launch, outerPlanState, palloc(), parallel_leader_participation, ParallelExecutorInfo::pcxt, GatherMergeState::pei, PlanState::plan, GatherMergeState::ps, PlanState::ps_ExprContext, PlanState::ps_ProjInfo, ParallelExecutorInfo::reader, GatherMergeState::reader, ResetExprContext, PlanState::state, TupIsNull, and GatherMergeState::tuples_needed.

Referenced by ExecInitGatherMerge().

◆ ExecInitGatherMerge()

| GatherMergeState * ExecInitGatherMerge | ( | GatherMerge * | node, |

| EState * | estate, | ||

| int | eflags | ||

| ) |

Definition at line 69 of file nodeGatherMerge.c.

References Assert, CurrentMemoryContext, ExecAssignExprContext(), ExecConditionalAssignProjectionInfo(), ExecGatherMerge(), ExecGetResultType(), ExecInitNode(), ExecInitResultTypeTL(), fb(), gather_merge_setup(), i, innerPlan, makeNode, GatherMerge::numCols, OUTER_VAR, outerPlan, outerPlanState, palloc0_array, GatherMerge::plan, PrepareSortSupportFromOrderingOp(), Plan::qual, and SortSupportData::ssup_cxt.

Referenced by ExecInitNode().

◆ ExecReScanGatherMerge()

| void ExecReScanGatherMerge | ( | GatherMergeState * | node | ) |

Definition at line 342 of file nodeGatherMerge.c.

References bms_add_member(), ExecReScan(), ExecShutdownGatherMergeWorkers(), fb(), gather_merge_clear_tuples(), GatherMergeState::gm_initialized, GatherMergeState::initialized, outerPlan, outerPlanState, PlanState::plan, and GatherMergeState::ps.

Referenced by ExecReScan().

◆ ExecShutdownGatherMerge()

| void ExecShutdownGatherMerge | ( | GatherMergeState * | node | ) |

Definition at line 305 of file nodeGatherMerge.c.

References ExecParallelCleanup(), ExecShutdownGatherMergeWorkers(), fb(), and GatherMergeState::pei.

Referenced by ExecEndGatherMerge(), and ExecShutdownNode_walker().

◆ ExecShutdownGatherMergeWorkers()

| static void ExecShutdownGatherMergeWorkers | ( | GatherMergeState * | node | ) |

Definition at line 324 of file nodeGatherMerge.c.

References ExecParallelFinish(), fb(), GatherMergeState::pei, pfree(), and GatherMergeState::reader.

Referenced by ExecReScanGatherMerge(), and ExecShutdownGatherMerge().

◆ gather_merge_clear_tuples()

| static void gather_merge_clear_tuples | ( | GatherMergeState * | gm_state | ) |

Definition at line 526 of file nodeGatherMerge.c.

References ExecClearTuple(), fb(), i, and pfree().

Referenced by ExecReScanGatherMerge(), and gather_merge_getnext().

◆ gather_merge_getnext()

| static TupleTableSlot * gather_merge_getnext | ( | GatherMergeState * | gm_state | ) |

Definition at line 547 of file nodeGatherMerge.c.

References binaryheap_empty, binaryheap_first(), binaryheap_remove_first(), binaryheap_replace_first(), DatumGetInt32(), fb(), gather_merge_clear_tuples(), gather_merge_init(), gather_merge_readnext(), i, and Int32GetDatum().

Referenced by ExecGatherMerge().
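The binaryheap calls referenced by gather_merge_getnext() suggest a k-way merge driven by a heap of reader numbers. The following is a minimal standalone sketch of that technique, not the PostgreSQL implementation: `SortedSource`, `MergeHeap`, `merge_init()`, and `merge_next()` are hypothetical names, plain `int` values stand in for tuples, and the heap is a hand-written min-heap rather than `lib/binaryheap.h`.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_SOURCES 16          /* hypothetical limit for this sketch */

/* Hypothetical stand-in for one sorted input (a worker queue or the leader). */
typedef struct SortedSource
{
    const int  *vals;           /* values in ascending order */
    size_t      n;              /* number of values */
    size_t      pos;            /* index of the current head value */
} SortedSource;

/* Min-heap of source indexes, ordered by each source's current head value. */
typedef struct MergeHeap
{
    int           idx[MAX_SOURCES];
    int           size;
    SortedSource *src;
} MergeHeap;

/* Current head value of the source stored at heap position i. */
static int
head(const MergeHeap *h, int i)
{
    const SortedSource *s = &h->src[h->idx[i]];

    return s->vals[s->pos];
}

/* Restore the heap property below position i. */
static void
sift_down(MergeHeap *h, int i)
{
    for (;;)
    {
        int l = 2 * i + 1, r = l + 1, m = i, tmp;

        if (l < h->size && head(h, l) < head(h, m))
            m = l;
        if (r < h->size && head(h, r) < head(h, m))
            m = r;
        if (m == i)
            break;
        tmp = h->idx[i];
        h->idx[i] = h->idx[m];
        h->idx[m] = tmp;
        i = m;
    }
}

/* Add every non-empty source unordered, then heapify once. */
static void
merge_init(MergeHeap *h, SortedSource *src, int nsrc)
{
    assert(nsrc <= MAX_SOURCES);
    h->src = src;
    h->size = 0;
    for (int i = 0; i < nsrc; i++)
    {
        src[i].pos = 0;
        if (src[i].n > 0)
            h->idx[h->size++] = i;
    }
    for (int i = h->size / 2 - 1; i >= 0; i--)
        sift_down(h, i);
}

/* Store the next smallest value in *out; return 0 once all sources are drained. */
static int
merge_next(MergeHeap *h, int *out)
{
    SortedSource *s;

    if (h->size == 0)
        return 0;
    s = &h->src[h->idx[0]];
    *out = s->vals[s->pos++];
    if (s->pos == s->n)
        h->idx[0] = h->idx[--h->size];  /* source exhausted: drop it from the heap */
    sift_down(h, 0);                    /* top entry changed: re-establish order */
    return 1;
}
```

Storing source indexes instead of values keeps each heap entry small and lets the comparison always read the source's current head, which is similar in spirit to the `Int32GetDatum()` / `DatumGetInt32()` pair in the reference list above.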

◆ gather_merge_init()

| static void gather_merge_init | ( | GatherMergeState * | gm_state | ) |

Definition at line 443 of file nodeGatherMerge.c.

References Assert, binaryheap_add_unordered(), binaryheap_build(), binaryheap_reset(), castNode, CHECK_FOR_INTERRUPTS, ExecClearTuple(), fb(), gather_merge_readnext(), i, Int32GetDatum(), load_tuple_array(), GatherMerge::plan, and TupIsNull.

Referenced by gather_merge_getnext().

◆ gather_merge_readnext()

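| static bool gather_merge_readnext | ( | GatherMergeState * | gm_state, |

| int | reader, | ||

| bool | nowait | ||

| ) |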

Definition at line 636 of file nodeGatherMerge.c.

References Assert, EState::es_query_dsa, ExecProcNode(), ExecStoreMinimalTuple(), fb(), gm_readnext_tuple(), load_tuple_array(), outerPlan, outerPlanState, and TupIsNull.

Referenced by gather_merge_getnext(), and gather_merge_init().

◆ gather_merge_setup()

| static void gather_merge_setup | ( | GatherMergeState * | gm_state | ) |

Definition at line 396 of file nodeGatherMerge.c.

References binaryheap_allocate(), castNode, ExecInitExtraTupleSlot(), fb(), heap_compare_slots(), i, MAX_TUPLE_STORE, palloc0(), palloc0_array, GatherMerge::plan, and TTSOpsMinimalTuple.

Referenced by ExecInitGatherMerge().

◆ gm_readnext_tuple()

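| static MinimalTuple gm_readnext_tuple | ( | GatherMergeState * | gm_state, |

| int | nreader, | ||

| bool | nowait, | ||

| bool * | done | ||

| ) |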

Definition at line 714 of file nodeGatherMerge.c.

References CHECK_FOR_INTERRUPTS, fb(), heap_copy_minimal_tuple(), and TupleQueueReaderNext().

Referenced by gather_merge_readnext(), and load_tuple_array().

◆ heap_compare_slots()

| static int32 heap_compare_slots | ( | Datum | a, |

| Datum | b, | ||

| void * | arg | ||

| ) |

Definition at line 752 of file nodeGatherMerge.c.

References a, ApplySortComparator(), arg, Assert, b, compare(), DatumGetInt32(), fb(), GatherMergeState::gm_nkeys, GatherMergeState::gm_slots, GatherMergeState::gm_sortkeys, INVERT_COMPARE_RESULT, s1, s2, slot_getattr(), SortSupportData::ssup_attno, and TupIsNull.

Referenced by gather_merge_setup().
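The reference to INVERT_COMPARE_RESULT hints that the comparator result is negated, which is the usual way to get smallest-first ordering from a heap that hands back the entry comparing largest. The sketch below illustrates that idea under stated assumptions: `Row`, `CompareCtx`, `NKEYS`, and `compare_slots_inverted()` are hypothetical, and integer keys replace the `SortSupportData`-driven comparison.

```c
#include <assert.h>

#define NKEYS 2                 /* hypothetical number of sort keys */

/* Hypothetical stand-in for the tuple currently held in one slot. */
typedef struct Row
{
    int key[NKEYS];
} Row;

/* Hypothetical comparator context: one row per slot number. */
typedef struct CompareCtx
{
    const Row *rows;
} CompareCtx;

/* Three-way integer comparison yielding -1, 0, or 1. */
static int
compare_int(int x, int y)
{
    return (x > y) - (x < y);
}

/*
 * Compare the rows in slots a and b key by key. The first non-equal key
 * decides, and its result is negated so that a heap which returns the
 * "largest" entry ends up returning the smallest row.
 */
static int
compare_slots_inverted(int a, int b, void *arg)
{
    const CompareCtx *ctx = (const CompareCtx *) arg;

    assert(ctx != 0);
    for (int k = 0; k < NKEYS; k++)
    {
        int cmp = compare_int(ctx->rows[a].key[k], ctx->rows[b].key[k]);

        if (cmp != 0)
            return -cmp;        /* safe here: cmp is only ever -1 or 1 */
    }
    return 0;
}
```

Plain negation is acceptable in this sketch only because `compare_int()` never returns values outside -1, 0, and 1; a general-purpose inversion also has to cope with the most negative integer.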

◆ load_tuple_array()

| static void load_tuple_array | ( | GatherMergeState * | gm_state, |

| int | reader | ||

| ) |

Definition at line 597 of file nodeGatherMerge.c.

References fb(), gm_readnext_tuple(), i, MAX_TUPLE_STORE, and GMReaderTupleBuffer::nTuples.

Referenced by gather_merge_init(), and gather_merge_readnext().